Benchmarking Simulation Software: How One Molder Did It

Injection mold simulation has become a standard tool for molders and mold designers. Like any tool, it should be appropriate to the job at hand and it should be comfortable to use. In those regards—as well as others, such as cost—there is room for individual choice.

But how is a molder to select from the handful of competing simulation software packages? They are big, complex programs that take time to learn well enough to make comparative judgments. Given the substantial investment involved, and the great potential value of simulation to a molder’s bottom line, it is not a choice to be taken lightly.

One molder, MGS Mfg. Group in Germantown, Wis., undertook a thorough evaluation of three commercial simulation packages and benchmarked them by comparing simulation of the same parts. It’s a rare example of such a competitive evaluation—or at least of the willingness to discuss it in public. Even molders who don’t have the resources to emulate the MGS approach can gather valuable tips from its experience that could be applied to their own circumstances.

AN 18-MONTH PROJECT

MGS is a 29-year-old company involved in moldmaking, custom injection molding, and special hardware for multi-shot molding. MGS has around $153 million in revenues from eight plants in the U.S., Mexico, and—since June—in Ireland.

The simulation evaluation project was handled by Kevin Klotz, sr. project engineer for simulation services in the MGS QA department. In the fall of 2007 he was a new hire—for the second time, having previously worked at MGS as a project manager for two years. Most important, he had ample experience in mold simulation/analysis, having performed simulation services for the Plastics Technology Group of Allen-Bradley/Rockwell Automation.

In 2007, MGS was all set to buy a simulation package from Moldflow, the best-known brand in North America. MGS already used the simpler Moldflow Advisers software for quick upfront moldability analysis, and was considering moving up to the full-featured Moldflow Insight package.

But before taking that step, MGS management asked, Why not consider the others? If we’re going to invest all this money, let’s do our homework and make sure we buy the best package for our needs.

Klotz was assigned to examine the alternatives and to develop objectives for choosing between them. “We expected the whole project to take three to six months,” he says, “but it ended up taking almost 18 months from the day this began to the day we fully implemented our software choice. Fortunately, MGS management saw the value in this exercise and devoted an engineer to the task for the duration.”

Klotz began work in late 2007. He spent a few weeks doing online research and talking at length to other simulation users and to software vendors. He wanted to know about software capabilities, cost, maintenance, and ease of use. He also wanted to know whether the software was suited not just to general injection molding but also to highly engineered, complex parts and tooling and to MGS’s specialty, multi-shot molding.

Klotz initially looked at all four of the major packages available in North America: Moldflow (now Autodesk Moldflow), Moldex3D from Coretech of Taiwan (moldex3d.com), Sigmasoft from Sigma Services of Germany (3dsigma.com), and VISI-flow from Vero Software. Before long, Klotz decided he couldn’t effectively evaluate more than three, so he narrowed the field to Moldflow, Moldex3D, and Sigmasoft.

Klotz gave those three vendors CAD files of three plastic parts that MGS had molded and asked each of the vendors to run complete 3D simulations of each. After two weeks, during which Klotz was in close touch with simulation engineers at the three software vendors, each vendor came to MGS to present its results.

Klotz says that filling, packing, and cooling results from all three vendors correlated well with MGS’s real-world experience. Where they differed was in the warpage predictions, especially for glass-reinforced parts. “We’ve been burned many times by problems related to part distortion, so this was a high priority for us,” Klotz explains. “We value accurate warpage prediction because many of our tools are very complex and can be expensive to modify.” One candidate was significantly closer than the others in predicting warpage of parts that MGS had documented from prior experience. Still, no clear and absolute winner had emerged. “We had discovered that each package had unique and attractive modular offerings and good performance capabilities. We also discovered that each package had weaknesses. Further review was deemed prudent before a final choice could be made.”
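The article does not say how MGS tallied the gap between predicted and documented warpage. As a rough sketch only, one simple way to make such a comparison is to compute the mean absolute error between each vendor's predicted warpage and the measured value for the same parts. The package names, part names, and every number below are invented placeholders, not MGS data.

```python
def mean_abs_error(predicted, measured):
    """Average absolute gap between predicted and measured warpage (same units)."""
    if not measured:
        raise ValueError("need at least one measured part")
    if predicted.keys() != measured.keys():
        raise ValueError("predicted and measured must cover the same parts")
    return sum(abs(predicted[p] - measured[p]) for p in measured) / len(measured)


# Placeholder measured warpage in mm for three previously molded parts.
measured = {"part 1": 0.80, "part 2": 1.50, "part 3": 0.40}

# Placeholder predictions from three hypothetical, unnamed packages.
predictions = {
    "Package A": {"part 1": 0.60, "part 2": 1.10, "part 3": 0.30},
    "Package B": {"part 1": 0.75, "part 2": 1.40, "part 3": 0.45},
    "Package C": {"part 1": 1.10, "part 2": 1.90, "part 3": 0.60},
}

errors = {name: mean_abs_error(pred, measured) for name, pred in predictions.items()}
closest = min(errors, key=errors.get)  # package with the smallest average gap

for name, err in sorted(errors.items(), key=lambda item: item[1]):
    print(f"{name}: mean absolute error {err:.3f} mm")
```

A single averaged number hides which parts were missed and by how much, so a per-part table is worth keeping alongside it, particularly for the glass-reinforced parts where the predictions differed most.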

WHERE DOES SIMULATION FIT?

“While all this was going on,” says Klotz, “we were thinking about how we would implement simulation once we decided on a purchase. How could we use it best for the greatest effect? What CAD solid modeling packages were best suited to each simulation candidate? What was the best method for importing models and exporting results for our eventual choice of simulation package? What were the potential traps that we could fall into with each of the simulation programs?

“It took six months of discussion to decide where simulation belonged in our organization. If applied properly, simulation could help solve problems at every stage of a project. It can help in part design, material selection, tool design, and even processing. In the end, we decided that the one organizational function tied into all of those stages is Quality Assurance. So we placed simulation in the QA Dept., where I now work.”

After that initial benchmarking phase, Klotz engaged in more discussions with engineers at other companies who were experienced in simulation. He could not find anyone who had extensive experience with all three software candidates. Some had experience with two, but none with all three. However, their experience largely matched what MGS had learned from the early benchmarking.

“We needed to test-drive the candidates,” Klotz concluded. “We couldn’t be sure unless we had experience in using the software ourselves. There were big differences among the programs in how the analyses were set up and meshed and how long they took to run. Those were important considerations because, for example, sometimes we have only a few days to a week to design a tool. In such a situation, if the simulation took two to three days, it wouldn’t meet our needs.”

That became Phase II of the benchmarking project. Klotz already had experience with Moldflow, so he invested three months to learn to work with Moldex3D and another three months to do the same with Sigmasoft. “I knew I wasn’t going to become a pro at using these packages in just a few months, so I was careful to use the same new parts while evaluating the software.”

Klotz practiced with several parts that MGS had molded. “I learned how models are built in each software product, how they are meshed, and about the merits of different meshing techniques. I learned about their post-processing capabilities and how they generate reports.” In comparing results from simulating different parts, Klotz evaluated the accuracy of results, ease of use, simulation time, software cost, and ease of implementation in the MGS business structure. “I also looked at who had the strongest development group and what they had done to develop their software over the last five years.”

Klotz identified certain merits and weaknesses in each candidate. One, for example, offered a variety of meshing styles while another did not. Was that an advantage or was it a limitation to be investigated? This and other questions arose during the evaluation and could only be answered through real-world part simulation at MGS.

Additionally, Klotz gained first-hand experience at mesh cleanup, model import/export challenges, material database manipulation, and post-processing results interpretation, presentation, and report generation with the various programs. Computer hardware became an important item of discussion due to the processing demands of 3D mesh techniques. A more powerful computer was purchased as a result of the review.

“I am confident that any of these simulation programs could have been implemented successfully at MGS,” Klotz concludes. But after weighing all the factors, MGS chose Sigmasoft in May of 2009. “We still use Autodesk Moldflow Advisers for early product reviews,” notes Klotz. “In 15 minutes, it can help us screen gate locations, fill patterns, gas traps, and pressure to fill. But if we pursue a more in-depth review of a part or tool, we use Sigmasoft.”

Klotz keeps current on development of the other software programs through contacts with other users. He expects that over time these vendors will “leapfrog” one another in capabilities. He has also developed relationships with outside analysts that MGS uses on a consulting basis. For example, MGS called on an outside service bureau to use Moldflow simulation for an injection/compression application and used another service source to simulate a gas-assist application in Moldex3D. These simulation functions are not yet available for Sigmasoft.

HOW SHOULD YOU DO IT?

Klotz offers this advice to other molders who are considering a simulation software purchase: “Number one, it is important that you understand your goals for simulation within your organization. Are you looking to improve quality or reduce cost or time to market? The level of simulation capability you need is a critical early decision. By first determining your goals for simulation you can then choose the appropriate level and not waste resources.

“Many molders think of simulation as belonging to the part-design function,” Klotz notes. “But that means the recommendations developed through simulation don’t always make it down the line to tooling and processing groups. You want the benefits of simulation to reach every stage of product development.”

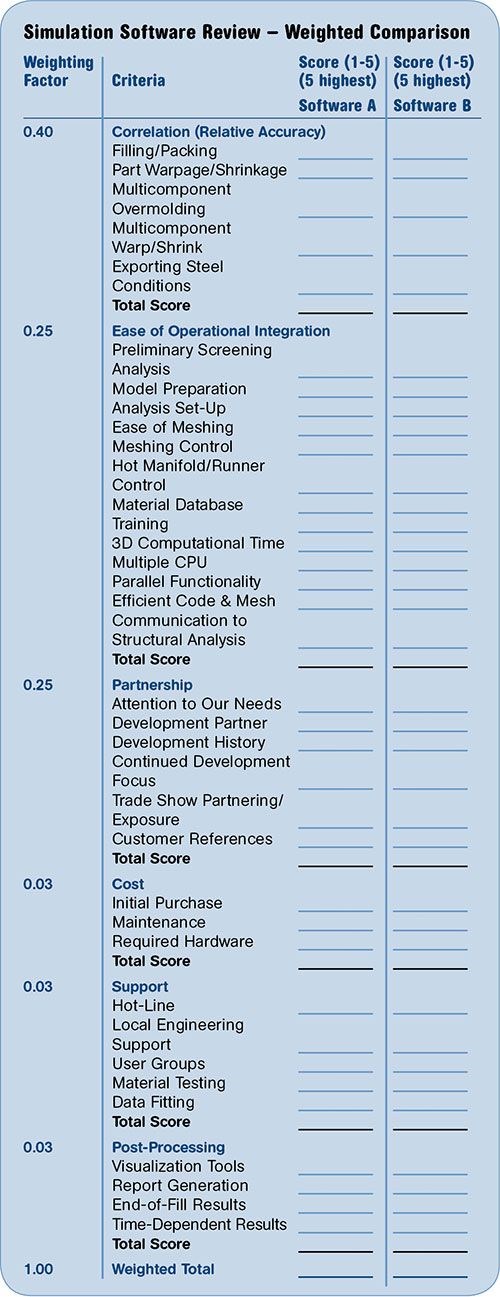

Klotz developed the accompanying Simulation Software Review sheet to help prioritize and evaluate all the criteria that MGS applied to simulation software. He weighted the various factors according to his estimation of their relative importance to MGS. Other molders obviously might weight them differently.
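For molders who want to build a similar sheet of their own, a weighted review can be sketched in a few lines of Python. This is an illustration only, not a reproduction of the MGS sheet: the criteria are the five named earlier in the article, while the weights, the 1-to-5 scores, and the package names are invented placeholders.

```python
# Hypothetical weights (higher = more important). Not MGS's actual weighting.
WEIGHTS = {
    "accuracy of results": 5,
    "ease of use": 3,
    "simulation time": 4,
    "software cost": 3,
    "ease of implementation": 2,
}


def weighted_score(scores, weights=WEIGHTS):
    """Return the weight-normalized score, on the same scale as the inputs."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(weights[c] * scores[c] for c in weights) / total_weight


def rank(candidates, weights=WEIGHTS):
    """Sort candidate names from highest to lowest weighted score."""
    return sorted(
        candidates,
        key=lambda name: weighted_score(candidates[name], weights),
        reverse=True,
    )


# Placeholder 1-to-5 scores for three hypothetical, unnamed packages.
candidates = {
    "Package A": {"accuracy of results": 4, "ease of use": 3, "simulation time": 3,
                  "software cost": 2, "ease of implementation": 4},
    "Package B": {"accuracy of results": 3, "ease of use": 4, "simulation time": 4,
                  "software cost": 4, "ease of implementation": 3},
    "Package C": {"accuracy of results": 5, "ease of use": 3, "simulation time": 2,
                  "software cost": 3, "ease of implementation": 3},
}

for name in rank(candidates):
    print(f"{name}: {weighted_score(candidates[name]):.2f}")
```

Changing the weights can reorder the candidates, which is why each molder's own priorities should drive the weighting.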

Second, Klotz advises molders, “Give your criteria to software providers and ask them to demonstrate how their product and organization would satisfy those needs. For example, if warpage predictions are important to you, give the software vendors one or two product designs and compare their warpage predictions to your reality.”

“Third, communicate with other simulation users. Ask about ease and simplicity of model building and meshing—steps that can take a great deal of your time. Ask how long it takes to convert a solid model into a simulation model. How fast can you obtain a good-quality mesh? How easy is it to modify a model and mesh when considering ‘what-if’ scenarios? How easily can a simulation analyst produce a useful report?”

By useful he means a report that serves as a good communication tool throughout the organization so product and tool designers and processing specialists can understand the relevance of the results and their potential impact on their activities. “For example, 30 pages of colorful simulation images with very little text to explain what the images mean to a tool designer or processor is ultimately of very little value.”

Fourth, hire a trained simulation analyst. Trained, that is, in part and mold design and processing, not just in using the software. “Simulation is a mathematical approximation of a very complicated manufacturing process. Always remember that simulation is just a tool. It doesn’t make decisions; people do. Simulation provides information on which a knowledgeable person can base decisions with greater likelihood of success.

“Putting it another way, simulation does not guarantee quality in molding. A quality molded part is the result of the cumulative effort of a team of capable people. Simulation can be a valuable tool for that team if the results can be interpreted by members of the team.”

When comparing simulation programs, Klotz says, you will likely be looking for one that gives the most accurate results. “But it is important to determine the level of accuracy you need and in what time frame. Inclusion of finer tool and process details often leads to more accurate final results, but the additional time investment may be more costly than the benefit received.”

Valuable as it is, MGS doesn’t use simulation on every job. “Sometimes the project budget and time frame won’t allow for it,” says Klotz. “Sometimes the cost of simulation can make or break a sale. For a customer to truly understand the savings possible with simulation, they have to assign a value to problem avoidance. That can be one of the hardest parts of selling the need for simulation.

“And simulation is not always necessary. If, for example, the part is relatively simple or similar in design to ones with which we are familiar, we’ll rely on prior experience. But if a part is very complex or uses an unfamiliar material—that’s where we’ll use simulation to help resolve potential problems early on.”

Klotz feels that all simulation programs have improved considerably in recent years at the basic functions of modeling, meshing, and analysis of filling, packing, and cooling. Still, at any point in time, one package may outshine another in its ability to handle more complex levels of simulation like multi-shot or insert overmolding, gas assist, injection/compression, etc.

Among areas of simulation technology that Klotz feels still need the most work, one critical area is material databases. He says they are most often not accurate or complete enough to accurately predict shrinkage and warpage. This is especially true for crystalline and glass-filled materials. “Regardless of the software used, poor material data virtually guarantees an equally poor simulation result,” he warns.

He also sees a need for better estimation of the probability of defects occurring in a molded part. “Simulation will show with great accuracy where you can expect a sink or a void. But will it be a sink or will it be a void? And if it’s a sink, how visible will it be in the molded part? That’s up to the analyst’s interpretation.”

Which leads him to the last item on his wish list—that software providers direct their development teams toward making their post-processing results as intuitive to the analyst as possible.

Related Content

Are Your Sprue or Parts Sticking? Here Are Some Solutions

When a sprue or part sticks, the result of trying to unstick it is often more scratches or undercuts, making the problem worse and the fix more costly. Here’s how to set up a proper procedure for this sticky wicket.

Read MoreHow to Stop Flash

Flashing of a part can occur for several reasons—from variations in the process or material to tooling trouble.

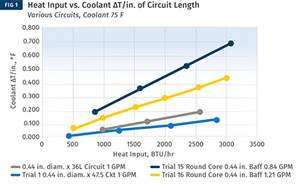

Read MoreImprove The Cooling Performance Of Your Molds

Need to figure out your mold-cooling energy requirements for the various polymers you run? What about sizing cooling circuits so they provide adequate cooling capacity? Learn the tricks of the trade here.

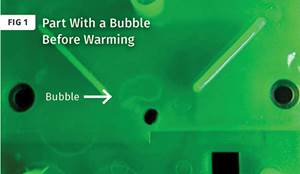

Read MoreHow to Get Rid of Bubbles in Injection Molding

First find out if they are the result of trapped gas or a vacuum void. Then follow these steps to get rid of them.

Read MoreRead Next

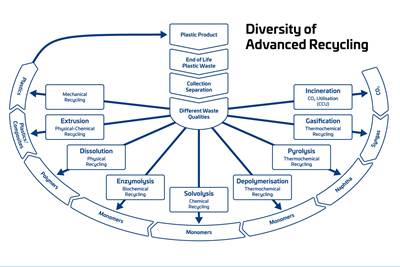

Advanced Recycling: Beyond Pyrolysis

Consumer-product brand owners increasingly see advanced chemical recycling as a necessary complement to mechanical recycling if they are to meet ambitious goals for a circular economy in the next decade. Dozens of technology providers are developing new technologies to overcome the limitations of existing pyrolysis methods and to commercialize various alternative approaches to chemical recycling of plastics.

Read MoreProcessor Turns to AI to Help Keep Machines Humming

At captive processor McConkey, a new generation of artificial intelligence models, highlighted by ChatGPT, is helping it wade through the shortage of skilled labor and keep its production lines churning out good parts.

Read MoreHow Polymer Melts in Single-Screw Extruders

Understanding how polymer melts in a single-screw extruder could help you optimize your screw design to eliminate defect-causing solid polymer fragments.

Read More